
Big O notation is a fundamental concept in programming and algorithm analysis. It helps to express the efficiency and performance of algorithms.

In this context, an algorithm is the sequence of steps a computer follows to accomplish a certain task, and its cost is measured by the number of simple operations those steps require.

For instance, an algorithm that takes two numbers and returns their sum looks like this in JavaScript:

function sum(a, b) {
  return a + b
}

In this example, Big O notation describes how the runtime or memory needed by sum grows with its input. Because sum performs a single addition no matter which numbers it receives, its cost does not grow at all.

Therefore, understanding Big O notation is akin to having a roadmap for evaluating the scalability and efficiency of algorithms.

It enables developers to decide which algorithm best fits a specific task.

The Basics of Big O Notation

At its core, Big O notation is a mathematical notation that gives an upper bound on how an algorithm's time complexity grows. In practice, it is most often used to describe the worst-case scenario.

It allows programmers to assess how an algorithm's execution time increases as the input size grows towards infinity.

Big O notation is written as O(f(n)), where "n" is the input size and f(n) is a mathematical function that describes how the algorithm's cost grows.

It gives developers a standardized way to reason about whether a solution will stay scalable and efficient as its input grows.

1. Mathematical Foundation of Big O Notation

Big O describes the limiting behaviour of a function: it quantifies how the runtime or space requirements of an algorithm grow as the input size approaches infinity.

It provides a concise way to express this behaviour using mathematical notation.
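
For readers who want the formal statement behind this, the standard textbook definition can be written as below. The article itself does not spell it out; the constants c and n_0 are the usual names from that definition.

```latex
\[
f(n) = O\bigl(g(n)\bigr)
\iff
\exists\, c > 0,\ \exists\, n_0 \ge 0 :\quad
0 \le f(n) \le c \cdot g(n) \quad \text{for all } n \ge n_0
\]
```

As a worked instance, take f(n) = 3n + 5 and g(n) = n. Choosing c = 4 and n_0 = 5 works because 3n + 5 ≤ 4n whenever n ≥ 5, so 3n + 5 is O(n).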

Common Big O notations include:

- O(1) - Constant time

- O(n) - Linear time

- O(n²) - Quadratic time

- O(n log n) - Linearithmic time

Understanding the limiting behaviour of functions is fundamental in algorithmic analysis.

It allows us to compare and contrast different algorithms, helping us choose the most efficient one for a given task.

2. Time Complexity and Auxiliary Space Complexity

These are two fundamental concepts in algorithm analysis.

a. Time Complexity

Time complexity describes how the running time of an algorithm grows as the size of the input increases.

It abstracts away constant factors and lower-order terms, focusing on the dominant behaviour. In essence, it provides a high-level view of how an algorithm scales.

As the input size (n) increases, some algorithms perform significantly better than others.

For instance, an algorithm with a linear time complexity (O(n)) will experience a linear increase in runtime as the input size grows.

On the other hand, an algorithm with constant time complexity (O(1)) will maintain a consistent runtime, regardless of input size.
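
The difference can be sketched with two ways of adding the integers from 1 to n. The function names sumUpToLoop and sumUpToFormula are hypothetical, chosen only for this illustration.

```javascript
// O(n): the loop body runs once for every integer from 1 to n
function sumUpToLoop(n) {
  let total = 0
  for (let i = 1; i <= n; i++) {
    total += i
  }
  return total
}

// O(1): a fixed number of arithmetic operations, whatever n is
function sumUpToFormula(n) {
  return (n * (n + 1)) / 2
}

console.log(sumUpToLoop(100)) // 5050
console.log(sumUpToFormula(100)) // 5050
```

Both return the same result, but only the first one does more work as n grows.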

b. Auxiliary Space Complexity

Space complexity quantifies how much memory an algorithm needs as the input size grows.

Auxiliary space complexity counts only the additional memory the algorithm allocates, not including the space taken up by the inputs.
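
As a rough sketch of the distinction, the two hypothetical functions below (sumAll and doubleAll are illustrative names, not from any library) take the same input but allocate very different amounts of extra memory.

```javascript
// O(1) auxiliary space: only one extra number (total) is kept, however long arr is
function sumAll(arr) {
  let total = 0
  for (const value of arr) {
    total += value
  }
  return total
}

// O(n) auxiliary space: builds a new array with one entry per input element
function doubleAll(arr) {
  const doubled = []
  for (const value of arr) {
    doubled.push(value * 2)
  }
  return doubled
}

console.log(sumAll([1, 2, 3])) // 6
console.log(doubleAll([1, 2, 3])) // [ 2, 4, 6 ]
```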

Interpreting Big O Notation

Let's look into some common notations and their implications.

Understand that "n" is the input size, representing the quantity of data the algorithm processes.

1. O(1) - Constant Time Complexity

Description: This signifies constant time complexity; the execution time is independent of the input size.

Illustration in JavaScript Object (average case in typical engines):

- Accessing an element is O(1)

- Insertion of an element is O(1)

- Deletion of an element is O(1)

- Using the hasOwnProperty method is O(1)

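Illustration in JavaScript Array:

- Accessing an element by index is O(1)

- Using the pop method on Array is O(1)

- Using the push method on Array is O(1) amortized (an occasional resize of the underlying storage costs more, but the average cost per call stays constant)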

Example:

// O(1) - Constant time operations
function getFirstElement(arr) {
  return arr[0] // Takes the same time regardless of array size
}

function addToEnd(arr, element) {
  arr.push(element) // Amortized constant time: occasional resizes average out
}

Interpretation:

O(1) means the execution time for the algorithm remains constant regardless of the input size. That is, "n" doesn't affect the execution time.
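
The object operations listed earlier can be sketched the same way. The user object and its keys are made up for illustration, and the constant-time behaviour is an assumption about typical JavaScript engines rather than something the language specification guarantees.

```javascript
const user = { name: 'Ada', role: 'admin' }

const name = user.name // access by key
user.email = 'ada@example.com' // insertion
delete user.role // deletion
const hasEmail = Object.prototype.hasOwnProperty.call(user, 'email') // key check

console.log(name) // Ada
console.log(hasEmail) // true
console.log(Object.keys(user)) // [ 'name', 'email' ]
```

None of these steps needs to look at the other keys, which is why the cost does not depend on how many properties the object holds.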

2. O(n) - Linear Time Complexity

Description: Linear time complexity denotes that the execution time grows linearly with the input size. As the input increases, so does the time taken.

Illustration in JavaScript Object:

- Searching for a value is O(n)

- Using the Object.keys method is O(n)

- Using the Object.values method is O(n)

- Using the Object.entries method is O(n)

Illustration in JavaScript Array:

- Searching for an element is O(n)

- Using the shift method on Array is O(n)

- Using the unshift method on Array is O(n)

Example:

// O(n) - Linear time operations
function findElement(arr, target) {
  // Worst case: target is last or absent, so every element is checked
  for (let i = 0; i < arr.length; i++) {
    if (arr[i] === target) {
      return i
    }
  }
  return -1
}

function sumArray(arr) {
  let sum = 0
  // Visits each element exactly once
  for (let i = 0; i < arr.length; i++) {
    sum += arr[i]
  }
  return sum
}

Interpretation:

O(n) means the execution time grows proportionally with "n".

Quick note on insertion and deletion in an array:

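Insertion and deletion in an array can be constant, O(1), or linear, O(n), depending on the index of the target item. Adding or removing at the end leaves the other elements where they are, while doing so at the start or in the middle forces every later element to be re-indexed.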
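
To make the cost of the index visible, here is a minimal sketch of a hand-written insertion that counts how many existing elements it has to move. insertAt is a hypothetical helper written for this illustration; built-in methods such as unshift and splice do comparable shifting conceptually, though engines may optimize the details.

```javascript
// Inserts value at index by shifting later elements one slot to the right.
// Returns how many existing elements had to move.
function insertAt(arr, index, value) {
  let moves = 0
  for (let i = arr.length; i > index; i--) {
    arr[i] = arr[i - 1]
    moves++
  }
  arr[index] = value
  return moves
}

const atEnd = [1, 2, 3, 4]
console.log(insertAt(atEnd, atEnd.length, 5)) // 0

const atStart = [1, 2, 3, 4]
console.log(insertAt(atStart, 0, 0)) // 4
```

Inserting at the end moves nothing, while inserting at the start moves every element, so the cost grows with the array length.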

3. O(n²) - Quadratic Time Complexity

Description: Quadratic time complexity implies that the execution time is proportional to the square of the input size. It's often associated with nested loops.

Example:

// O(n²) - Quadratic time complexity
function bubbleSort(arr) {
  const n = arr.length
  for (let i = 0; i < n - 1; i++) {
    // Each pass bubbles the largest remaining element to the end,
    // so the last i elements are already in place
    for (let j = 0; j < n - i - 1; j++) {
      if (arr[j] > arr[j + 1]) {
        // Swap elements
        ;[arr[j], arr[j + 1]] = [arr[j + 1], arr[j]]
      }
    }
  }
  return arr
}

// Collects every unordered pair: n(n - 1) / 2 pairs for n elements
function findPairs(arr) {
  const pairs = []
  for (let i = 0; i < arr.length; i++) {
    for (let j = i + 1; j < arr.length; j++) {
      pairs.push([arr[i], arr[j]])
    }
  }
  return pairs
}

Illustration:

Performing a bubble sort on an array.

Interpretation:

O(n²) means the execution time grows in proportion to the square of "n": doubling the input size roughly quadruples the work.
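
One way to see the quadratic growth is to count the comparisons made by the same nested-loop pattern bubble sort uses. countComparisons is a hypothetical helper for this illustration and does no sorting itself.

```javascript
// Counts the comparisons made by the nested-loop pattern bubble sort uses
function countComparisons(n) {
  let comparisons = 0
  for (let i = 0; i < n - 1; i++) {
    for (let j = 0; j < n - i - 1; j++) {
      comparisons++
    }
  }
  return comparisons
}

console.log(countComparisons(10)) // 45
console.log(countComparisons(20)) // 190
```

Going from 10 to 20 elements doubles the input but multiplies the comparisons by a little more than four.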

4. O(n log n) - Linearithmic Time Complexity

Description: This indicates an algorithm whose time complexity falls between linear and quadratic. It commonly arises in algorithms that repeatedly divide the input and do linear work on each level of division, like merge sort.

Example:

// O(n log n) - Linearithmic time complexity
function mergeSort(arr) {
  // Base case: arrays of length 0 or 1 are already sorted
  if (arr.length <= 1) {
    return arr
  }
  const mid = Math.floor(arr.length / 2)
  const left = mergeSort(arr.slice(0, mid))
  const right = mergeSort(arr.slice(mid))
  return merge(left, right)
}

// Merges two sorted arrays into one sorted array in linear time
function merge(left, right) {
  const result = []
  let leftIndex = 0
  let rightIndex = 0
  while (leftIndex < left.length && rightIndex < right.length) {
    // <= takes equal elements from the left half first, keeping the sort stable
    if (left[leftIndex] <= right[rightIndex]) {
      result.push(left[leftIndex])
      leftIndex++
    } else {
      result.push(right[rightIndex])
      rightIndex++
    }
  }
  // Append whatever remains in either half
  return result.concat(left.slice(leftIndex)).concat(right.slice(rightIndex))
}

Illustration:

Merge sort and quicksort are widely used sorting algorithms. Merge sort is O(n log n) in every case, while quicksort is O(n log n) on average and O(n²) in the worst case.

Interpretation:

O(n log n) combines logarithmic and linear growth: the input is halved about log n times, and each level of halving requires work proportional to "n".
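
The logarithmic part can be sketched by counting how many times an input of size n can be halved before only one element is left. countHalvings is a hypothetical helper for this illustration; it rounds up on each split, matching the larger half of an uneven division.

```javascript
// Counts how many times n can be halved before reaching 1,
// i.e. the number of splitting levels for an input of size n
function countHalvings(n) {
  let levels = 0
  while (n > 1) {
    n = Math.ceil(n / 2)
    levels++
  }
  return levels
}

console.log(countHalvings(8)) // 3
console.log(countHalvings(1024)) // 10
console.log(countHalvings(1024) * 1024) // 10240
```

For 1024 elements that is about 10 levels of linear work, roughly 10,240 steps, compared with more than a million for a quadratic algorithm on the same input.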

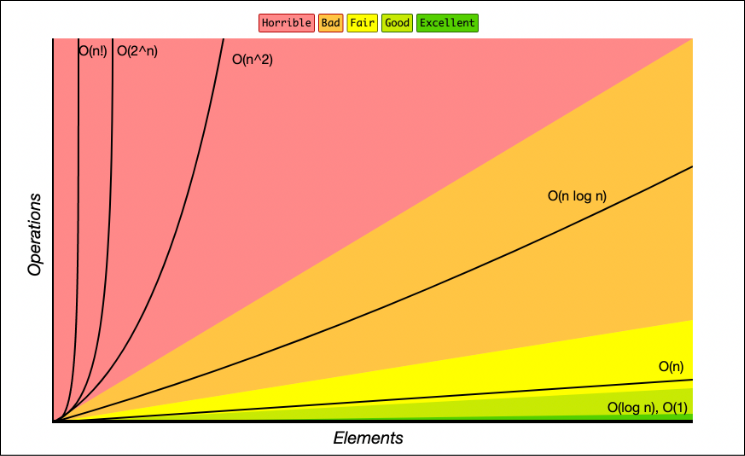

Big O Complexity Comparison

The table below summarizes how different complexities compare:

| Complexity | Name | Example Operations |
|---|---|---|
| O(1) | Constant | Array access, Hash table lookup (average case) |
| O(log n) | Logarithmic | Binary search |
| O(n) | Linear | Linear search, Array traversal |
| O(n log n) | Linearithmic | Merge sort, Quick sort (average case) |
| O(n²) | Quadratic | Bubble sort, Nested loops |
| O(2ⁿ) | Exponential | Naive recursive Fibonacci |
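
The table lists binary search as the O(log n) example, which the earlier sections do not show in code. A minimal sketch follows, assuming the input array is already sorted in ascending order; binarySearch is an illustrative name.

```javascript
// Binary search over a sorted array: each step discards half of the remaining range
function binarySearch(sortedArr, target) {
  let low = 0
  let high = sortedArr.length - 1
  while (low <= high) {
    const mid = Math.floor((low + high) / 2)
    if (sortedArr[mid] === target) {
      return mid
    }
    if (sortedArr[mid] < target) {
      low = mid + 1 // target can only be in the upper half
    } else {
      high = mid - 1 // target can only be in the lower half
    }
  }
  return -1 // not found
}

console.log(binarySearch([1, 3, 5, 7, 9, 11], 7)) // 3
console.log(binarySearch([1, 3, 5, 7, 9, 11], 4)) // -1
```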

Practical Tips for Algorithm Analysis

1. Focus on the Worst Case

Big O notation typically describes the worst-case scenario, helping you understand the maximum time or space your algorithm might need.
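
To illustrate why the worst case matters, this sketch counts how many elements a linear search inspects for different targets. countChecks and the sample data are hypothetical, chosen only for this example.

```javascript
// Linear search that reports how many elements it inspected
function countChecks(arr, target) {
  let checks = 0
  for (const value of arr) {
    checks++
    if (value === target) {
      break
    }
  }
  return checks
}

const data = [4, 8, 15, 16, 23, 42]
console.log(countChecks(data, 4)) // 1 (best case: first element)
console.log(countChecks(data, 42)) // 6 (target is the last element)
console.log(countChecks(data, 99)) // 6 (worst case: target is absent)
```

The best case finishes after one check, but the O(n) label describes the cases where every element has to be inspected.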

2. Drop Constants and Lower-Order Terms

When analyzing complexity:

- O(2n) becomes O(n)

- O(n² + n) becomes O(n²)

- O(n + 1000) becomes O(n)
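
A small sketch of why the constant in O(2n) is dropped: making two passes over the input takes twice as many steps as one pass, but the ratio between them stays fixed as n grows. countOnePass and countTwoPasses are hypothetical step counters, not real algorithms.

```javascript
// One pass over the input: n steps
function countOnePass(n) {
  let steps = 0
  for (let i = 0; i < n; i++) {
    steps++
  }
  return steps
}

// Two passes over the input: 2n steps, still O(n)
function countTwoPasses(n) {
  return countOnePass(n) + countOnePass(n)
}

console.log(countTwoPasses(1000) / countOnePass(1000)) // 2
console.log(countTwoPasses(1000000) / countOnePass(1000000)) // 2
```

Because the ratio never changes, both functions grow at the same rate and belong to the same complexity class.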

3. Consider Both Time and Space

Don't forget to analyze space complexity alongside time complexity, especially for memory-constrained applications.
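
The trade-off can be sketched with two ways of checking an array for duplicates. Both function names are hypothetical, and the O(n) time for the Set version assumes average constant-time Set lookups, which is typical of JavaScript engines.

```javascript
// O(n²) time, O(1) auxiliary space: compare every pair
function hasDuplicateNested(arr) {
  for (let i = 0; i < arr.length; i++) {
    for (let j = i + 1; j < arr.length; j++) {
      if (arr[i] === arr[j]) return true
    }
  }
  return false
}

// O(n) time on average, O(n) auxiliary space: remember values already seen
function hasDuplicateWithSet(arr) {
  const seen = new Set()
  for (const value of arr) {
    if (seen.has(value)) return true
    seen.add(value)
  }
  return false
}

console.log(hasDuplicateNested([3, 1, 4, 1])) // true
console.log(hasDuplicateWithSet([3, 1, 4, 5])) // false
```

The second version is faster on large inputs but pays for that speed with extra memory proportional to the input size.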

Conclusion

Understanding Big O notation lets you evaluate how efficient an algorithm is. It's a cornerstone of writing code that stays performant, scalable and cost-effective as applications grow.

Understanding these concepts will help you:

- Choose the right algorithm for specific problems

- Optimize existing code for better performance

- Design scalable systems that handle growth efficiently

- Make informed trade-offs between time and space complexity

As you continue your programming journey, remember that Big O notation is not just academic theory; it's a practical tool that guides real-world decision-making in software development.